10 AI Chatbot Best Practices for DTC (2026)

Nearly 70% of shoppers who add something to their cart leave without buying (glued). Some were never serious. But a lot of them had a question, needed a fast answer, and moved on when one did not come.

That is the actual problem AI chatbots solve in DTC, when built correctly. A specific shopper, a specific moment of hesitation, an answer that arrives in time and closes the gap.

The brands getting real returns from AI chatbots did something deliberate. They identified exactly where shoppers lose confidence during the purchase journey, built around those specific moments, and kept improving after go-live. That is a narrower brief than most teams start with, and it is the reason the results differ so much.

The ones still waiting for their chatbot to perform are usually running something trained on outdated FAQs, disconnected from live order data, with no clear owner after launch. The technology is capable. The implementation is where the gap lives.

What follows is what getting it right actually looks like in practice.

Why AI Chatbots Are Becoming Essential for DTC Brands in 2026

Direct-to-consumer brands do not operate in stable conditions. Traffic spikes around product launches. Flash sales compress weeks of demand into hours. A carrier delay can generate thousands of tracking questions in a single day. Support volume does not grow gradually. It surges.

At the same time, revenue is often lost in small moments. A shopper hesitates at checkout because delivery timing is unclear. A sizing detail is buried in a product page. A discount code fails without explanation. These are not major objections. They are unanswered questions. When no one responds in time, the sale disappears.

Expectations have also changed. Customers assume brands will recognize them, understand what they purchased before, and respond with relevant guidance. A generic reply feels careless. A slow reply feels worse. Loyalty in DTC is fragile, and the experience matters as much as the product.

Support teams are under pressure from both sides. They are expected to respond instantly while handling growing complexity in subscriptions, bundles, exchanges, and policy variations. Yet most of their time is still spent answering repetitive questions about order status, returns, and shipping rules.

This is where AI chatbots have become practical infrastructure rather than optional tools. They absorb predictable, high-volume requests and resolve them immediately. That frees human agents to focus on exceptions, emotional situations, and high-value edge cases that require judgment.

For DTC brands in 2026, the model is straightforward. Automation handles what is repetitive and rules-based. Humans handle what is nuanced and sensitive. The system is designed to reduce friction at key moments in the buying and post-purchase journey, not to block access behind scripted flows.

When built around real ecommerce workflows and live operational data, chatbots shift from being a support add-on to becoming part of how the brand grows.

10 Best Practices for AI Chatbots in DTC Ecommerce (2026)

AI chatbots have become one of the most consequential tools a direct-to-consumer brand can deploy, and also one of the most commonly wasted. The difference between a chatbot that generates revenue and one that frustrates customers into leaving rarely comes down to which platform was chosen. It comes down to how the chatbot was built, what systems it is connected to, and whether the team behind it treats it as infrastructure or as a feature they shipped once and moved on from.

This guide covers ten best practices for DTC brands implementing or improving AI chatbots in 2026, from personalization and channel consistency to escalation design, mobile experience, and the specific ways bots fail when they are disconnected from live operational data.

1. Personalize With Real Customer Data, Not Assumptions

One of the most common AI chatbot mistakes in ecommerce is treating personalization as a feature rather than a data problem. Inserting a first name or recommending the last viewed product is not personalization. It is pattern-matching that customers see through immediately.

Effective personalization for DTC chatbots means the bot knows this specific customer bought the starter kit six weeks ago and is due for a refill. It means recognizing that someone who spent eight minutes on the size guide is not browsing casually. A returning subscriber should get a different response than a first-time visitor asking the same question about the same product.

Purchase history, subscription status, cart contents, and browsing behavior are the data inputs that make genuine personalization possible. Without them, the bot is guessing. The metric that reveals whether personalization is working: conversion rate after chatbot interaction versus sessions with no chatbot involvement. If there is no meaningful gap, the data layer is not doing its job.

2. Maintain One Customer Identity Across Every Channel

DTC shoppers move across channels constantly. A shopper might discover a product on TikTok, ask a sizing question on Instagram DMs, browse on desktop, and complete checkout on their phone three days later. That is a normal purchase path in 2026. Most chatbots treat each of those touchpoints as a separate session from a stranger.

When customer identity does not carry across channels, every interaction resets. Context is lost. The shopper repeats themselves. Recommendations become generic. The intent that was building across multiple sessions disappears.

A well-built DTC chatbot system maintains one synchronized identity across web chat, WhatsApp, Instagram, and email. The conversation that started on one channel continues on the next without the customer noticing any seam. During product drops and flash sales where timing affects conversion, this continuity matters more than almost any other design decision.

A practical signal that this is broken: the bot asks again for context the customer already provided in a previous session on a different channel.
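One way to picture a synchronized identity is a lookup that maps every channel handle to the same customer profile. The sketch below is a minimal in-memory illustration, not a production design: `CustomerProfile`, `IdentityResolver`, the channel names, and the handles are all hypothetical, and it assumes the brand has already verified which handles belong to which shopper.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CustomerProfile:
    """One shopper, regardless of which channel they write from (hypothetical model)."""
    customer_id: str
    history: list[str] = field(default_factory=list)  # notes from all channels


class IdentityResolver:
    """Maps (channel, handle) pairs to a single shared customer profile."""

    def __init__(self) -> None:
        self._by_handle: dict[tuple[str, str], CustomerProfile] = {}

    def link(self, profile: CustomerProfile, channel: str, handle: str) -> None:
        # Register a handle once it has been verified as belonging to this shopper.
        self._by_handle[(channel, handle.lower())] = profile

    def resolve(self, channel: str, handle: str) -> Optional[CustomerProfile]:
        # Unknown handles return None, so the bot can treat them as new visitors.
        return self._by_handle.get((channel, handle.lower()))


resolver = IdentityResolver()
shopper = CustomerProfile(customer_id="cust_123")
resolver.link(shopper, "instagram", "@Sam.Runs")
resolver.link(shopper, "web", "sam@example.com")

# A sizing question arrives on Instagram...
resolver.resolve("instagram", "@sam.runs").history.append("instagram: asked about size M fit")

# ...and web chat, days later, sees the same history instead of a stranger.
web_profile = resolver.resolve("web", "sam@example.com")
print(web_profile.history)
```

Because both handles point at the same object, the web session starts with the Instagram context already attached. A real system would persist this mapping and handle merges and consent, which this sketch leaves out.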

3. Use Proactive Chat Only to Resolve a Specific Uncertainty

Proactive chat is one of the most misused features in DTC ecommerce chatbot deployments. Triggering a message the moment a visitor lands on any page does not help them. It interrupts them before they have formed a question, and they dismiss it and move on.

The moments where proactive chat measurably improves conversion are specific. A shopper who has been on the delivery information section for longer than expected likely has a question about arrival timing. A shopper whose promo code just returned an error needs an explanation and an alternative, not a restated error message. A shopper who has spent several minutes on a size guide needs help making a decision.

Before setting any proactive chat trigger, define the specific uncertainty it resolves. If that answer is vague, the trigger creates noise rather than value. Measure conversion rates in sessions with proactive engagement against sessions without it to confirm the timing is right.
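The rule "define the uncertainty first" can be made concrete by requiring every trigger to carry the question it resolves. The following is a hedged sketch: the `Trigger` and `SessionState` types, the section names, and the dwell-time thresholds are illustrative assumptions, not values from any particular platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Trigger:
    name: str
    uncertainty: str  # the specific question this trigger is meant to resolve
    message: str


@dataclass
class SessionState:
    page_section: str          # e.g. "delivery_info", "size_guide", "home"
    seconds_on_section: float
    promo_code_failed: bool = False


# Illustrative thresholds, not recommendations; tune them against real session data.
DELIVERY_DWELL_SECONDS = 45
SIZE_GUIDE_DWELL_SECONDS = 120


def pick_trigger(state: SessionState) -> Optional[Trigger]:
    """Return a trigger only when a specific uncertainty is likely; otherwise stay silent."""
    if state.promo_code_failed:
        return Trigger(
            "promo_error",
            "Why did my code fail, and is there an alternative?",
            "That code did not apply. Want me to check why and find one that works?",
        )
    if state.page_section == "delivery_info" and state.seconds_on_section >= DELIVERY_DWELL_SECONDS:
        return Trigger(
            "delivery_timing",
            "When will this arrive?",
            "Need an arrival estimate for your address?",
        )
    if state.page_section == "size_guide" and state.seconds_on_section >= SIZE_GUIDE_DWELL_SECONDS:
        return Trigger(
            "size_help",
            "Which size should I pick?",
            "Want help choosing a size?",
        )
    return None  # simply landing on a page is not a reason to interrupt
```

A visitor who just landed on the home page gets no message at all, which is the point: a trigger that cannot name its `uncertainty` has no branch in this function.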

4. Design Escalation as a Seamless Transition, Not a Dead End

Poor escalation design is one of the most damaging AI chatbot problems in ecommerce customer service. When a shopper finally requests a human agent and that agent opens with “Can you describe your issue?”, the brand has just confirmed that nothing shared with the bot was retained.

Every detail matters in that moment: the order number, the nature of the complaint, how long the customer has been trying to resolve it. A properly designed escalation passes the full conversation thread, relevant order details, and a clear summary of why the handoff happened before the agent joins the conversation.
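As a rough illustration of what such a handoff might carry, the sketch below bundles the transcript, order details, and escalation reason into one object and renders a one-line summary for the agent. The `HandoffPacket` and `Message` names and their fields are hypothetical; a real helpdesk integration would define its own schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Message:
    sender: str  # "customer" or "bot"
    text: str


@dataclass
class HandoffPacket:
    """Everything the agent should see before joining (hypothetical schema)."""
    customer_id: str
    order_number: Optional[str]
    reason: str
    minutes_in_conversation: int
    transcript: list[Message] = field(default_factory=list)

    def summary(self) -> str:
        # Short header shown to the agent above the full transcript.
        order = self.order_number or "no order identified"
        return (
            f"Escalated: {self.reason}. Order: {order}. "
            f"In chat for {self.minutes_in_conversation} min, "
            f"{len(self.transcript)} messages so far."
        )


packet = HandoffPacket(
    customer_id="cust_123",
    order_number="1042",
    reason="damaged item, refund requested",
    minutes_in_conversation=12,
    transcript=[
        Message("customer", "My blender arrived cracked."),
        Message("bot", "I'm sorry about that. Connecting you with an agent."),
    ],
)
print(packet.summary())
```

With this in front of them, the agent can open with the order and the problem rather than asking the customer to start over.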

Track customer satisfaction scores specifically after escalation events, separately from overall chatbot satisfaction. If that number is consistently lower, context is being lost in the transfer. That is a fixable technical and process problem, not an inherent limitation of automation.

5. Connect the Chatbot to Live Operational Data

A chatbot that cannot access real-time order status, live inventory, return eligibility, and subscription data is not an AI agent. It is a FAQ page with a chat interface. The gap between those two things is where most DTC chatbot implementations quietly fail.

A bot trained on static policy documents can tell a customer delivery takes three to five business days. It cannot tell them their specific order has a carrier delay. The first answer is technically accurate. The second is what the customer actually needed to know.

When the chatbot is connected to live systems, it can resolve real questions: whether a specific variant is in stock, whether an order placed 32 days ago qualifies for return under a 30-day policy, whether a subscription is active or already cancelled. That capability is what separates a chatbot that reduces support volume from one that generates it.
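The return-window example shows why live data matters: the answer depends on the actual order date, not on the policy text alone. Here is a minimal sketch of that check. It assumes the window is counted in calendar days from the order date and that the final day is inclusive; many brands count from the delivery date instead, so treat both choices as assumptions to adapt.

```python
from datetime import date, timedelta

RETURN_WINDOW_DAYS = 30  # the 30-day policy from the example; adjust to your own policy


def is_return_eligible(order_date: date, today: date, window_days: int = RETURN_WINDOW_DAYS) -> bool:
    """True if `today` is within `window_days` calendar days of the order date (inclusive)."""
    return (today - order_date).days <= window_days


today = date(2026, 3, 15)
print(is_return_eligible(today - timedelta(days=32), today))  # False: 32 days is past the window
print(is_return_eligible(today - timedelta(days=30), today))  # True: day 30 still counts here
```

A bot working only from static policy text can state the 30-day rule, but it needs the order record to say which side of the line a given customer falls on.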

6. Treat the Knowledge Base as a Live System

DTC product catalogs change constantly. Promotions expire. Shipping carriers update their policies. Return windows get adjusted. A chatbot trained on content from last quarter is already giving incorrect answers, and in most cases the team does not know until customers start escalating or complaining publicly.

Incorrect answers in ecommerce chatbots do more damage than no answer at all. A confidently wrong response about a return policy, a reformulated ingredient, or an expired discount code does not just frustrate the customer in that moment. It damages their trust in the brand’s reliability overall.

Establish a weekly review process for failed conversations and escalation reasons. The patterns that emerge are a direct map of where the knowledge base has fallen behind. Address the highest-frequency gaps first and track whether escalation rates for those specific intents decline in the following weeks.

7. Build the Mobile Experience Separately

Mobile cart abandonment rates consistently exceed desktop abandonment, and the difference is not explained by purchase intent. Mobile shoppers want to buy. They abandon because the experience creates friction that desktop does not.

Chat interfaces that work cleanly on a 15-inch screen often create real problems on a phone: response text that requires scrolling, buttons too small to tap reliably, multi-step flows that interrupt momentum, and product cards that load slowly over a mobile connection. None of these are acceptable on a surface where most DTC purchase decisions are made.

The mobile chatbot experience should be designed as its own product, not adapted from desktop. That means fast response rendering, large tap targets, single-action flows for common requests like order tracking and return initiation, and visual content that loads without delay. The success metric is not whether the mobile experience technically functions. It is whether mobile completion rates match desktop completion rates.

8. Measure Chatbot Performance by Intent, Not by Aggregate

Aggregate chatbot metrics are among the most misleading numbers in ecommerce analytics. An overall resolution rate that looks acceptable can hide specific intent categories that are failing entirely, because the categories that perform well mask them in the average.

Order tracking might resolve at 90%. Product questions about a newly launched collection might resolve at 35% because the knowledge base was not updated before launch. Subscription pause requests might be failing completely because the integration to the subscription platform was not finished before go-live. Because order tracking usually accounts for most of the volume, the blended rate across those three intents can still look manageable. The customers who could not pause their subscription and cancelled instead are not visible in that blended number.

Break resolution rate down by specific intent type and track each one week over week. That view shows exactly where improvements are needed and confirms whether changes made are actually working.
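A small worked example makes the masking effect visible. The conversation counts below are invented for illustration (100 order-tracking chats, 20 new-collection questions, 5 subscription pause requests), chosen so the per-intent rates match the 90%, 35%, and 0% figures above.

```python
from collections import defaultdict

# Hypothetical log of (intent, resolved_by_bot) pairs; counts are made up for illustration.
conversations = (
    [("order_tracking", True)] * 90 + [("order_tracking", False)] * 10
    + [("new_collection_questions", True)] * 7 + [("new_collection_questions", False)] * 13
    + [("subscription_pause", False)] * 5
)


def resolution_by_intent(rows):
    """Return {intent: share of conversations the bot resolved} for each intent."""
    totals = defaultdict(int)
    resolved = defaultdict(int)
    for intent, ok in rows:
        totals[intent] += 1
        resolved[intent] += int(ok)
    return {intent: resolved[intent] / totals[intent] for intent in totals}


overall = sum(ok for _, ok in conversations) / len(conversations)
per_intent = resolution_by_intent(conversations)

print(f"overall: {overall:.1%}")  # overall: 77.6%
for intent, rate in per_intent.items():
    print(f"{intent}: {rate:.0%}")
```

With these counts, the overall rate is 97 of 125, or 77.6%, while subscription pause sits at 0%. The high-volume intent dominates the aggregate, which is why the failing intent only shows up in the per-intent view.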

9. Be Transparent That It Is a Bot

Customers who know they are interacting with an AI and still find it genuinely useful trust the brand more than customers who later realize they were misled. Transparency does not reduce chatbot effectiveness. The evidence consistently points the other way.

Beyond customer trust, there is a practical risk dimension. A chatbot that confidently misrepresents a return policy, a promotional condition, or a product claim creates a liability. Brands have had to honor incorrect commitments made by their own bots. Label the chatbot clearly as an AI assistant. Provide a visible path to a human agent at every stage of the conversation, not only after frustration has already built. Screenshots of chatbot failures circulate quickly. What happens in a chat window is effectively public.

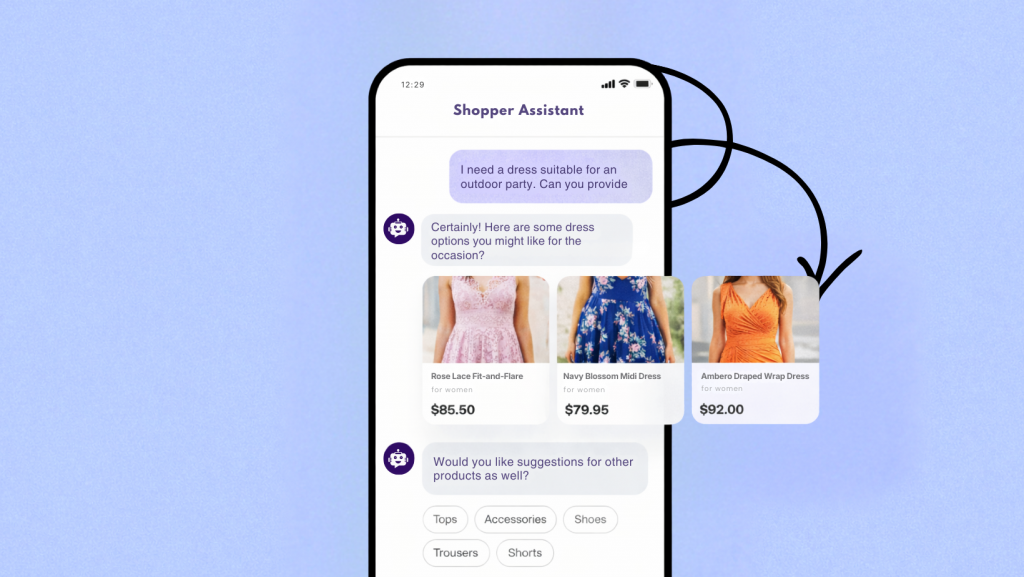

10. Use Visual Content to Answer Questions Text Cannot

A significant share of DTC product returns trace back to purchase decisions made without enough information, not to product dissatisfaction. A customer who orders the wrong size because the fit was unclear, or returns a home item because the dimensions were not visualized well, could have been retained with better guidance before checkout.

Text descriptions of sizing, fit, or product details rarely resolve the underlying question, which is not “what are the specifications” but “will this work for me specifically.” Size guide comparison cards, images showing fit across different body types, and short demonstration videos answer that question in a way that text cannot.

Deliver visual content inside the chat conversation rather than redirecting customers to a separate page. Every navigation away from the checkout path introduces a drop-off risk. Keeping the decision-making process inside a single conversation increases the likelihood it reaches completion.

DTC AI Chatbot FAQ: Support, Sales, and Retention

How quickly can a DTC brand launch a useful AI chatbot?

Most direct-to-consumer brands can ship a helpful bot in 2 to 4 weeks, if they start with high-value use cases. A lean first release focuses on order status, return workflows, and common product questions, which often covers most customer requests from day one.

Will customers use the chatbot instead of opening support tickets or emailing?

They usually will when the answer is immediate and accurate. If the bot gives a real solution in seconds, people prefer it over long waiting times. If not, they leave quickly.

Can a DTC AI chatbot help grow revenue, or is it only for support?

It does both. A mature DTC chatbot can reduce friction in buying moments, recover abandoned carts, guide upsells, and answer objections fast. In practice, it becomes a support assistant, shopping assistant, and conversion assistant in one interface.

What features should a DTC chatbot include on day one?

Start with the basics that actually move the needle: order tracking links, shipping timelines, returns and exchange steps, sizing and stock checks, and one-click human handoff for complex cases. This gives shoppers confidence quickly and saves support time right away.

How much customer support can a DTC chatbot realistically automate?

Most teams automate 60 to 80 percent of repetitive questions. Policy details, order updates, payment and shipping FAQs, and basic product help are usually the highest volume topics and easiest to automate accurately.

Do AI chatbots work well on mobile shopping journeys?

Only if they are built mobile-first. Most DTC traffic comes from phones, so your bot should load fast, show short quick replies, and avoid forcing deep scrolling. If it feels natural in one hand, it will be used more.

Which channels should DTC brands activate first?

Begin with website chat so support is available right at the moment of purchase intent, then expand to social and messaging channels. For most ecommerce brands, website plus one support-heavy messaging channel, like WhatsApp or Instagram, gives the best ROI in the first phase.

How do you keep AI chatbot answers accurate when products change often?

Use one centralized knowledge base and connect it to your product, pricing, and policy systems. Update that source whenever anything changes, and the chatbot reflects it everywhere. No separate copy updates across channels. This is where most gains in accuracy come from.

Can AI support bots damage trust or brand experience?

They can, but the risk is manageable if you set expectations correctly. Tell users clearly when they are chatting with AI, keep the tone consistent with the brand, keep answers accurate, and make escalation visible. Customers value speed and honesty, and that combination builds confidence.

How do you measure whether a DTC AI chatbot is actually performing?

Track three layers. First, support outcomes: bot resolution rate and average first-response time. Second, commercial impact: cart recovery, browse-to-checkout completion, and conversion lift. Third, quality signals: sentiment, CSAT, and repeat use. Together these show if it is helping the business, not just opening chats.

Conclusion

DTC brands in 2026 are competing on speed and reliability as much as product quality. Shoppers will move on fast when they cannot get a clear answer about shipping, returns, sizing, or checkout friction. That is why DTC AI chatbots matter most in moments of hesitation, not in marketing copy.

A useful chatbot is not a static feature. It is support infrastructure. It handles repetitive, high-volume questions with live data, while human agents focus on nuanced or emotional cases. The real impact comes from reducing friction in real time, and from connecting your chatbot to the systems your shoppers already use.

So if you want AI that actually helps your store, optimize for execution: keep one live knowledge source, maintain channel context, design strong human handoff, and measure by intent-level outcomes. Those decisions create real results in conversion, support efficiency, and customer trust.

If you want to put this framework into production, YourGPT can help you centralize customer support, sales and workflow automation, launch the right high-value intents first, and improve continuously from real customer conversations.